My last post was too long and covered too much terrain. Here’s a puzzle instead, one that whittles it all down.

What values do you store in an sRGB PNG to display a perceptually half-gray color, with an alpha of 0.5?

If you’re an absolute expert on PNG and perception and alpha, that’s all the information you need. Just in case, to make sure you don’t break any rules, here are the key bits:

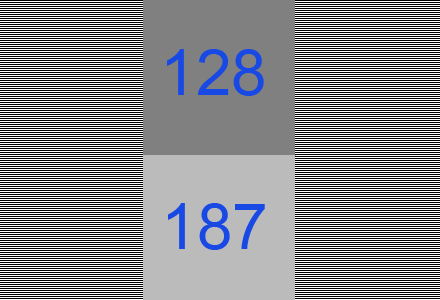

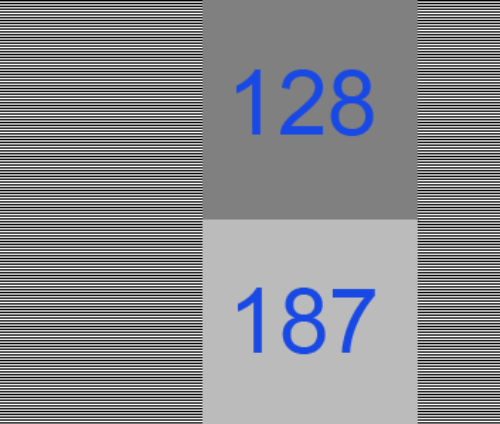

- A perceptually half-gray color on the screen is (187,187,187), not (128,128,128). To prove this to yourself, see the image below, which is from John Hable’s lovely article.

- Your PNG is saving values in sRGB space. No extremely-rare gamma = 1.0 PNG for you.

- Alpha is coverage. The PNG spec notes, “The gamma value has no effect on alpha samples, which are always a linear fraction of full opacity.”

- PNG alphas are unassociated: they do not premultiply the color. To display your sRGB PNG color composited against black, you must multiply it by your unassociated alpha value.

So, what do you store in your PNG image to get a half-gray color displayed, with an alpha of 0.5? A few hints, then the answer, are after the image below.

Horizontal fully-black and fully-white lines combine to a half-gray, represented by 187. That’s sRGB in action:

Hint #1: a half-gray color with an alpha of 1.0 (fully opaque) is stored in a PNG by (187,187,187,255).

Hint #2: if a PNG could store a premultiplied color, the answer would be (187,187,187,128).

Hint #3: to turn a premultiplied color into an unassociated color, divide the color by the (fractional) alpha.

And just to have something between you and the answer, here’s this, from I wish I knew where.

The answer is (255,255,255,128), provided by Mike Chock (aka friedlinguini), who commented on my post – see the comments below. My answer was definitely wrong, so I’ll explain why this answer works.

The PNG spec notes, “This computation should be performed with intensity samples (not gamma-encoded samples)”. So, to display an sRGB-encoded PNG, you must do the following:

- Convert the sRGB color to linear space. For (255,255,255,128) this gives (1.0,1.0,1.0).

- Now multiply in the alpha, to get a linear premultiplied value. The stored alpha of 128 is 128/255, or about 0.5, so the result is (0.5,0.5,0.5).

- Convert this value back to sRGB space and display it. This gives (187,187,187) as the color to display.
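Here is a minimal sketch of those three steps in Python. It assumes the standard piecewise sRGB transfer functions; the function names are made up for illustration and don't come from any PNG library. With the exact sRGB curve and an alpha of 128/255, the result falls between 187 and 188, which matches the 187 above up to rounding.

```python
def srgb_to_linear(c8):
    """Decode an 8-bit sRGB channel value to linear light in [0, 1]."""
    c = c8 / 255.0
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(lin):
    """Encode linear light in [0, 1] as an unrounded value on the 0-255 sRGB scale."""
    if lin <= 0.0031308:
        c = lin * 12.92
    else:
        c = 1.055 * lin ** (1 / 2.4) - 0.055
    return c * 255.0


def display_over_black(r, g, b, a):
    """Composite an unassociated sRGB RGBA pixel over black, in linear space."""
    alpha = a / 255.0  # alpha is already a linear fraction; no gamma applied
    # Step 1: sRGB -> linear. Step 2: multiply by alpha. Step 3: linear -> sRGB.
    return tuple(linear_to_srgb(srgb_to_linear(ch) * alpha) for ch in (r, g, b))


shown = display_over_black(255, 255, 255, 128)
print([round(v, 1) for v in shown])
```

Note that the alpha value never goes through the sRGB curve; only the color channels do.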

Me, I thought that PNGs with sRGB values and alphas were displayed by simply multiplying the sRGB by the stored alpha. Wrong! At least, by the spec. How could I think such a crazy thing? Because every viewer and every browser I tested showed this to be how such a PNG was displayed.

So, I’m very happy to find PNG is not broken; it’s simply that no one implements it correctly. If you do know some software that does display this image properly (your browser does not), let me know – it’ll be my example of how things should work.

Update: as usual, Jim Blinn predates my realizations by about 18 years. His article “A Ghost in a Snowstorm” (collected in the book Notation, Notation, Notation; most of this article can be found here) talks about the right way (linearization) and the errors caused by the various wrong ways of encoding alpha and sRGB. Thanks to Sean Barrett for pointing it out.

My conclusion remains the same: if you want fun puzzles and you’re near a big city, check out The Puzzled Pint, a great free social puzzle event each month.

For the record, here’s my original wrong answer:

The answer is (373,373,373,128). To display this RGBA correctly, you multiply by the alpha (and divide by 255, since the value 128 represents 0.5) to get (187,187,187).

And that’s the fatal flaw of sRGB PNGs in a nutshell: you can’t store 373 in 8 bits in a PNG. 16 bits doesn’t help: PNGs store their values as fractions in the range [0.0, 1.0].
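The arithmetic behind that 373 can be sketched as below. This is only an illustration of the reasoning in this original (wrong) answer, where the un-premultiply division is done directly on sRGB values; the function name is hypothetical.

```python
def unpremultiply_in_srgb(c8, a8):
    """Divide an sRGB channel value by the fractional alpha, with no linearization."""
    alpha = a8 / 255.0
    return c8 / alpha


needed = unpremultiply_in_srgb(187, 128)
print(round(needed), needed > 255)  # prints "373 True": too big for an 8-bit channel
```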

No linearization or filtering or order of operations or any such thing involved, just a simple question. Unfortunately, PNG fails.

Wrong answers include:

- (187,187,187,128) – this would work if PNG had a premultiplied mode. It does not, so this color would be multiplied by 0.5 and displayed as (94,94,94). That said, this is a fine way to store the data if you have a closed system and no one else will ever use your PNGs.

- (187,187,187,255) – this will display correctly, but doesn’t keep the alpha around.

- (255,255,255,128) – this gives you a display value of (128,128,128) for the color, which Hable’s image shows is not a perceptual half-gray. If you used the PNG gamma chunk and set gamma to 1.0, this would work. Almost no one uses this gamma setting (it causes banding unless you use 16 bits) and few tools support it.

- (255,255,255,187) – you break the PNG spec by sRGB correcting the alpha. This will actually display correctly, (187,187,187). If you composite this image over some other image with an alpha, this wrong alpha fails.

- (255,255,255,187) again – you decide to “remember” the alpha is sRGB corrected and will uncorrect it before using it as an alpha elsewhere. If you want to break the spec, better to go with storing a premultiplied color, the first wrong answer. This fix is confusing.

- (255,255,255,128) again – you store the correct alpha, but require that you first convert the stored color from sRGB to linear before applying the alpha, then convert the color back to sRGB to display it. This will work, but it defies radiance and alpha theory, it’s convoluted, expensive, super-confusing, not how anyone implements PNG display, and not how the spec reads, as I understand it. Better to just store a premultiplied color.
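The display values quoted in this list all assume the simple interpretation, where the stored sRGB color is multiplied directly by the alpha fraction. A small sketch of that assumption, with a made-up function name, reproduces the numbers:

```python
def naive_display(rgba):
    """Multiply stored sRGB channels by alpha/255, with no linearization."""
    r, g, b, a = rgba
    return tuple(round(ch * a / 255) for ch in (r, g, b))


candidates = [
    (187, 187, 187, 128),  # premultiplied-style storage
    (187, 187, 187, 255),  # opaque half-gray, alpha lost
    (255, 255, 255, 128),  # white with linear alpha
    (255, 255, 255, 187),  # white with sRGB-"corrected" alpha
]
results = {c: naive_display(c) for c in candidates}
for rgba, shown in results.items():
    print(rgba, "->", shown)
```

Under this interpretation the four candidates display as 94, 187, 128, and 187 respectively.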

I wish my conclusion was wrong, but I don’t see any solution short of adding a new chunk to the PNG spec. My preference is adding a chunk that notes the values are stored as premultiplied.

In the meantime, if you want solvable puzzles and you’re near a big city, check out The Puzzled Pint, a great free social puzzle event each month.

Addendum

Zap Andersson debated this puzzle with me on Facebook, and many thanks to him. He prefers the solution (255,255,255,128), applying the alpha “later.” To clarify, here’s how PNGs are normally interpreted (and I think this follows the spec, though I’d be happy to be proven wrong, as then PNG would still work, even if no viewer or browser I know currently implements it correctly):

To display a PNG RGBA in sRGB: you multiply the RGB color by the alpha (expressed as a fraction).

The “later” solution to display a PNG RGBA in sRGB: you convert the sRGB number stored to a linear value, you then apply the alpha, and then you convert this linear value back to sRGB for display.

I like this, as convoluted as it is, in that it makes PNG work (I really don’t want to see PNG fail). The problem with this solution is that I don’t think anyone does it this way; browsers certainly don’t.

Zap also points out this interesting page, which points to this even more relevant page. My takeaway is that I shouldn’t talk about 187-gray as the perceptually average gray; 128-gray really does look perceptually more acceptable (which is one reason gamma correction is often justified: human perception is non-linear, as is the monitor. I forgot). This doesn’t actually change anything above: the “half-covered pixel” example should still get a display level of 187. This is confirmed by alternating full-black and full-white lines averaging out to 187, for example.
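The black-and-white-lines check can be sketched numerically. This assumes the standard piecewise sRGB curve and that the eye averages the light from the lines linearly; the helper names are illustrative only. With the exact curve the average comes out at roughly 187.5, so whether it rounds to 187 or 188 depends on the rounding rule.

```python
def srgb_to_linear(c8):
    """Decode an 8-bit sRGB channel value to linear light in [0, 1]."""
    c = c8 / 255.0
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(lin):
    """Encode linear light in [0, 1] as an unrounded value on the 0-255 sRGB scale."""
    if lin <= 0.0031308:
        return lin * 12.92 * 255.0
    return (1.055 * lin ** (1 / 2.4) - 0.055) * 255.0


# Equal areas of full-black and full-white lines emit half of white's light.
average_light = (srgb_to_linear(0) + srgb_to_linear(255)) / 2
matching_gray = linear_to_srgb(average_light)

# For comparison: how much light a stored value of 128 actually emits.
light_of_128 = srgb_to_linear(128)

print(round(matching_gray, 1), round(light_of_128, 3))
```

A stored 128 emits only a bit over a fifth of white's light, which is why it does not match the striped pattern even though it may look like a reasonable middle gray.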