Today, AMD shipped the Radeon HD 5870, the first GPU to support the DirectX11 feature set. Most of the resources have been doubled in comparison to AMD’s previous top GPU, including two triangle rasterization units. The Tech Report has a nice writeup – to help make sense of the various counts of ALUs / “wavefronts” / cores / etc. I recommend reading the slides from Kayvon Fatahalian’s excellent presentation at SIGGRAPH this year.

Category Archives: Miscellaneous

Fundamentals of Computer Graphics, 3rd Edition

One bit of deja vu for me at SIGGRAPH this year was another book signing at the A K Peters booth. Last year’s SIGGRAPH had the signing for Real-Time Rendering; this year I was at the book signing for the third edition of Fundamentals of Computer Graphics. My presence at the signing was due to the fact that I wrote a chapter on graphics for games (this edition also has new chapters on implicit modeling, color, and visualization, as well as updates to the existing chapters). As in the case of Real-Time Rendering, I was interested in contributing to this book as a fan of the previous editions. Fundamentals is targeted as a “first graphics book” so it has a slightly different audience than Real-Time Rendering, which is meant to be the reader’s second book on the subject.

One bit of deja vu for me at SIGGRAPH this year was another book signing at the A K Peters booth. Last year’s SIGGRAPH had the signing for Real-Time Rendering; this year I was at the book signing for the third edition of Fundamentals of Computer Graphics. My presence at the signing was due to the fact that I wrote a chapter on graphics for games (this edition also has new chapters on implicit modeling, color, and visualization, as well as updates to the existing chapters). As in the case of Real-Time Rendering, I was interested in contributing to this book as a fan of the previous editions. Fundamentals is targeted as a “first graphics book” so it has a slightly different audience than Real-Time Rendering, which is meant to be the reader’s second book on the subject.

At the A K Peters booth I also got to try out the Kindle edition of Fundamentals (the illustrations in Real-Time Rendering rely on color to convey information, so a Kindle edition will have to wait for color devices). I haven’t jumped on the Kindle bandwagon personally (the DRM bothers me; when I buy something I like to own it), but I know people who are quite pleased with their Kindle (or iPhone Kindle application).

HPG and SIGGRAPH: pix and links

Some seven links to keep you busy while we digest HPG and SIGGRAPH:

- Pictures of HPG and SIGGRAPH – even though just about everyone at these conferences has a camera in some form on them, we just about never take pictures. I decided to try to photograph anyone I recognized this year.

- Tim Sweeney’s HPG keynote slides – I didn’t attend the keynote, unfortunately, but heard about it. Main takeaway for me is that programming these highly parallel machines is hard, and the more that IHVs can do to ease the burden and remove limitations the more successful they will be.

- While waiting for our HPG reports, read Steve Worley’s.

- The course notes for “Advances in Real-Time Rendering in 3D Graphics and Games” will be up in a few weeks, if not sooner. Crytek’s presentation is available at their website.

- The “Beyond Programmable Shading” course notes are available now. I particularly liked Johan Andersson’s talk, partially for the sheer complexity of it all. The various factors that affect making a game engine fast are a bit mind-boggling.

- The place to go for interactive ray tracing development information is the ompf.org forum.

- This was the first year ever that I didn’t attend the Electronic Theater. Well, I did attend the first half-hour (live real-time demos), but then found myself looking at my watch as colorful but meaningless things occurred on the screen. I think the fact that we could attend the E.T. without needing a ticket meant that I could keep putting it off and also wouldn’t feel I lost anything if I missed it. If SIGGRAPH had issued me a ticket for a specific night, I suspect I would have willingly stayed for all of it, not wanting to lose the value of the ticket. Psychology. All that said, the best colorful but meaningless real-time demo I saw was “DT4 Identity SA“, freeware which runs on a Mac and is quite charming.

HPG 2009 – a closer look

When discussing things to do and see at SIGGRAPH, it is important to note the co-located conferences. This year, SIGGRAPH is co-located with the Eurographics Symposium on Sketch-Based Interfaces and Modeling (SBIM), the Symposium on Computer Animation (SCA), Non-Photorealistic Animation and Rendering (NPAR), and High-Performance Graphics (HPG). SCA has had good animation papers over the years, and is of interest to many game graphics programmers. NPAR is also a good conference for anyone interested in stylized rendering. In this post I will focus on HPG, which is a new conference formed out of the merger of the venerable Graphics Hardware conference, and the newcomer Symposium on Interactive Ray Tracing.

HPG is a three-day conference; the first two days are just before SIGGRAPH, and the third overlaps the first day of SIGGRAPH (unfortunately conflicting with the excellent SIGGRAPH course, Advances in Real-Time Rendering in 3D Graphics and Games).

HPG has managed to line up two pretty amazing keynotes. The first one is by Larry Gritz on film production rendering. Larry is a legend in the field; he was with Pixar from the Toy Story days, and co-wrote one of the most well-regarded books on Renderman. He since worked on several important renderers (BMRT, Gelato), and is now at Sony Pictures Imageworks. The second keynote is by Tim Sweeney, on the future of GPUs. As the outspoken chief architect of Epic’s Unreal Engine, Tim should need no introduction.

At the end of the conference, the two keynote speakers are joined by Steve Parker (NVIDIA) and Aaron Lefohn (Intel) for a panel on high-performance graphics in 7 year’s time.

HPG also has posters and “Hot 3D” systems presentations (hardware manufacturers talking about their latest designs). Inexplicably, although the acceptance deadline for both has long since passed, the content of neither of these is listed on the conference website yet.

I briefly discussed HPG papers in a previous post, but then only paper titles were available, making it hard to judge relevance; now many of the papers have preprints linked from Ke-Sen Huang‘s HPG 2009 papers page.

Some of the papers look relevant to current or near-future work. There are two interesting antialiasing papers: Morphological Antialiasing was covered by Eric in a recent post. The other antialiasing paper (A Directionally Adaptive Edge Anti-Aliasing Filter) does not have a preprint, but the title is promising. It is notable that one of the authors on this paper (Jason Yang) is listed as a speaker at the SIGGRAPH Advances in Real-Time Rendering in 3D Graphics and Games course; perhaps he will discuss the paper there. Although the NVIDIA paper Image Space Gathering has no preprint (yet), some information on this technique was disclosed at GDC: it involves rendering reflections and shadows into 2D buffers and then performing cross bilateral filters to mimic glossy reflections and soft shadows. I have seen similar techniques used in games, so it will be interesting to hear NVIDIA’s take on this concept. Another promising paper title: Scaling of 3D Game Engine Workloads on Modern Multi-GPU Systems.

The paper Parallel View-Dependent Tessellation of Catmull-Clark Subdivision Surfaces deals with tessellation using GPGPU methods rather than the DX11 tessellation pipeline; I’m not an expert in this area so it’s hard for me to judge, but it might be of interest for people working in this field.

I’m a bit skeptical of depth peeling techniques in general, but recent work in this area has shown some promise. The paper Bucket Depth Peeling lacks a preprint at this moment, but I look forward to learning more about it at the conference.

I found the title Data-Parallel Rasterization of Micropolygons With Defocus and Motion Blur promising because I am interested in the REYES micropolygon algorithm, and particularly in the way it handles defocus and motion blur effects. The technique presented in this paper appears to be less efficient than the REYES method, except for cases with very high velocity and/or defocus. The paper presents a GPU-efficient version of the REYES algorithm as well as an alternative algorithm which is faster in some cases. One of the authors has a blog post that gives some interesting context for the paper.

The amount of actual graphics hardware papers at the Graphics Hardware conference has been declining for years, which is probably one of the factors that precipitated the conference merger with IRT. This year there is only one paper which is clearly about hardware design: PFU: Programmable Filtering Unit for Mobile Multimedia Applications on Graphics Hardware. It has a fairly self-explanatory title, which is fortunate since it has no preprint available. Texture filtering is the last unassailable bastion of fixed-function hardware; for example, it is the only fixed-function unit in Larrabee. Programmable filtering is an intriguing concept; I look forward to the paper presentation. There is one more paper that may be about hardware (Embedded Function Composition); but the title is a bit opaque and it also has no preprint, so it is hard to be sure.

Despite my claim in the previous blog post, there do indeed appear to be quite a few papers about ray tracing this year: Efficient Ray Traced Soft Shadows using Multi-Frusta Traversal, Understanding the Efficiency of Ray Traversal on GPUs, Faster Incoherent Rays: Multi-BVH Ray Stream Tracing, Accelerating Monte Carlo Shadows Using Volumetric Occluders and Modified kd-Tree Traversal, Selective and Adaptive Supersampling for Real-Time Ray Tracing, Spatial Splits in Bounding Volume Hierarchies, Object Partitioning Considered Harmful: Space Subdivision for BVHs, and A Parallel Algorithm for Construction of Uniform Grids. Another paper, Hardware-Accelerated Global Illumination by Image Space Photon Mapping, combines image-space, GPU-accelerated methods for the initial bounce and final gather with ray-tracing for a complete photon mapping solution.

There are only three “GPGPU” papers this year; two on GPU stream compaction (copying selected elements of an array into a smaller array): Efficient Stream Compaction on Wide SIMD Many-Core Architectures and Stream Compaction for Deferred Shading, and one on computing minimum spanning trees for graphs (Fast Minimum Spanning Tree for Large Graphs on the GPU).

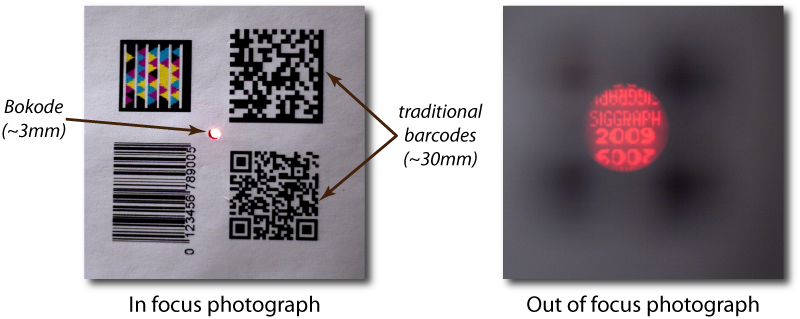

Bokode – clever!

Not directly relevant to real-time rendering (although there might be some tangentially related applications in areas like augmented reality), this SIGGRAPH 2009 paper is just painfully clever. It exploits the phenomenon of bokeh (the large circular blobs that small intense light sources generate in out-of-focus images) to create tiny barcodes that can be seen from a distance by cameras. They put a lenslet over the barcode, so that when viewed in a defocused manner you see a large circular blob – with a sharp image of the barcode in the center!

7 Things for July 26th

- Books, books, and more books: I received review copies of two books. Best of Game Programming Gems is as it sounds, certainly cheaper than buying the seven books in this series, no real review needed (look inside). Game Engine Architecture is about just that, how to make a professional-grade game rendering system, from soup to nuts (you can look inside). Eberly’s two books are the previous notable works in this area, but are quite different than this new volume. While they focus almost exclusively on algorithms, this book attempts to cover the whole task of developing an engine: what to use for source control, dealing with memory management and in-game profiling, input devices, SIMD, and many other practical topics. There is also algorithmic coverage of rendering, animation, collision detection and physics, among other areas. Naturally, the amount of information on each area is limited by page count (the book’s a solid 860 pages), but in my brief skim it looks like most of the critical areas and concepts are touched on. You won’t become an expert in any one area from this volume, but it looks like you’ll have some reasonably deep understanding of the elements that go into making a game engine. Quite an impressive work, and I know of nothing else in this area that is so detailed. I hope I get a chance to read it (who am I fooling? Though I do wish I had the time…) – well, at the least, it’s a place I’ll first go if I want to learn about a topic in game development that I know little about. If you’d rather wax nostalgic about great game engines you have known, as well as what the state of the are is, this article is for you (oh, yeah, the author of this new book works at the company that made #3).

- Looking around for titles I’d like to look over at SIGGRAPH, I found these: Game Graphics Programming, Programming the Cell Processor: For Games, Graphics, and Computation, Introduction to 3D Game Programming with DirectX 10, Ultimate Game Programming with DirectX, 2nd Ed., Advanced Game Programming, Game Coding Complete. Which all sort of sound the same (except for the Cell book), but I’d be happy to page through each and see if it looks promising.

- There’s a worthwhile comparison of average vertex normal computation methods on the MeshLab blog. He gives the nod to Thürmer and Wüthrich’s method. You can try each of the three using MeshLab itself.

- Sure, Spore didn’t light the world on fire as many of us hoped, but a lot of cool technology was explored. Chris Hecker has a worthwhile rundown of some of the great stuff they worked on.

- There are some surprisingly affordable 3D stereolithography objects available on Shapeways. I bought Spiral Cage (tiny, but impressive, and so cheap), Clematis (looks delicate, but is quite springy), and Gyroid (pricier, but more sizeable and a fun form). It’s great to see so many people exploring such areas; here’s a detailed summary of resources. Even if you never plan on getting involved, the Flickr area dedicated to such techniques is worth a browse.

- This one amused me: a cloud computing company had a contest that was meant to show off Ruby and cloud computing strengths. It was won by people brute-forcing the problem with GPUs: 16 used by the first-place winner (plus 117 CPU cores, which had less performance total than the 16 GPUs), 4 by the second. Steve Worley and others talk about the GPU approach on the CUDA forum (his program, shared with the community there, was used to win second place).

- I admire the dedication.

The evils of fps

I completely agree with this blog post by Humus on the uselessness of the performance numbers in most rendering papers. This is something that often comes up when reviewing papers. Frames-per-second (fps) numbers are less than useless, since they include extraneous information (the time taken to render parts of the scene not using the technique in question) and make it very difficult to do meaningful comparisons. The performance measurement game developers care about is the time to execute the technique in milliseconds.

Some papers do get it right, for example this one. The authors use milliseconds for detailed performance comparisons, only using fps to show how overall performance varies with camera and light position (which is a rare legitimate use of fps).

7 Things for July 20th

While at SIGGRAPH I like to look at new books at the booths. One you may wish to check out is Graphics Shaders: Theory and Practice, from AK Peters (or just use “Look Inside” on Amazon). I received a review copy and skimmed through it. If you’re interested in programming in GLSL 1.2 (part of OpenGL 2.1), consider looking at this one. A minor problem is that it’s not quite as up-to-date as the Orange Book (now on OpenGL 3.1), but the difference in core concepts between language versions is not large. The Graphics Shaders book is full color and comes with a lot of GLSL code examples. It has a bias towards scientific visualization, though not so much that it neglects the basics. I particularly enjoyed the chapter on noise, as it gave one of the clearest explanations I’ve seen on the differences between various types of basic interactive noise functions. One or two elements in the book are a little weak – the flowcharts for pipelines are often too small and difficult to read, for example – but all in all this looks like a solid contribution to the field. Don’t expect more elaborate effects, e.g., shadows are not touched upon. It does cover the basics, plus some additional topics like image post-processing (not normally covered in texts I’ve seen). One of the authors wrote a nice learning tool for GLSL, glman, free for download. If you find you like this tool, definitely consider the book.

Another book I noticed recently is Fluid Simulation for Computer Graphics. This is a topic I know little about, I was just interested to see that there’s any book at all. It looks pretty equation-filled, so is definitely for the serious practitioner.

Speaking of fluid simulation, Intel has an article on this topic for games. One of the chief strengths of any publication is that its staff makes a decision based on merit as to what is published and what is culled. So, I have to admit to being leery of anything that says, “Sponsored Feature”, as that means editorial review and decision-making are gone. I tend to err on the side of ignoring such articles (there’s plenty to read already). That said, Intel’s had quite a number of these articles recently, including such topics as instancing, ocean fog, FFT’s for image processing, and quite a few on parallelism.

In the “clearing the queue” category of links, I don’t think I ever pointed out this handy page, which presents all AMD/ATI and NVIDIA presentations at GDC 2009.

There’s now a (not very active, but at least it exists) Microsoft DirectX blog.

On the OpenGL front, NVIDIA has introduced bindless graphics to help avoid L2 cache misses. I will be interested to see how APIs evolve, as the elements in the current APIs that are bottlenecks are not so much CPU or GPU limitations as due to the API constructs themselves.

Thing for the day: an advertisement with interesting stippling.

7 Things for July 19th

Seven more:

- Michael Abrash has an in-depth article on rasterization on Larrabee. Perhaps a little too in-depth at times; just skim past the assembly instructions. I also found myself asking, “why do that?” – the key is to just keep reading. He tries to make his examples simple and comprehensible, but at the cost of sometimes feeling like they’re oversolving the problem. They aren’t, it’s just that the solution is in fact used in different circumstances in order to be efficient.

- SIGGRAPH has an interactive rendering event summary page. This page is more for the art production side of things, though; Naty’s course, talks, and production sessions summaries are more comprehensive and more useful for programmer attendees.

- NVIDIA has a number of events they’re involved in at SIGGRAPH 2009. Here’s the list.

- I love this sort of madness: a business-card ray tracer that does depth of field.

- Accumulated SSAO: the idea of reprojection, of using previous results by finding where they lie on this frame’s view, is one that seems a tad expensive for interactive rendering. It’s hard to know anything about performance and quality from this page, but I thought it was interesting to see.

- I mentioned Processing in the last post. Another language-related resource for graphics and game programming is pygame, a set of Python modules for writing games. A friend said he found this system to be pretty great, that he could whip up a fairly involved game idea in a few hours.

- Scribblenauts sounds like the coolest game that will ever come out, period. Even if it’s only 1/10th as good as the previews read, it looks to be pretty darn entertaining.

JGT is now the Journal of Graphics, GPU, and Game Tools

The name change for the journal formerly known as Journal of Graphics Tools was announced today. This does not reflect a change in focus; rather, the new name more accurately reflects the existing focus of the journal. The Journal of Graphics Tools was originally conceived as an ongoing successor to the Graphics Gems book series, and it has since been a great place for practical “nuggets” of graphics tech. Many of the best articles were also collected in a recent book.

I recommend subscribing to this journal, but contributing to it is even better. JGT is an excellent choice for any game developer who would like to publish a bit of tech they have created; while it is a fully peer-reviewed and citable publication, the focus is squarely on practical applications. Authors are not expected to spend a lot of time writing up previous research, summary, conclusion, future work, etc. For more information see the online author’s guide.

In the interests of full disclosure, I should note that all three authors of Real-Time Rendering are on the JGT editorial board.