Andrew Glassner and I are running a fun little workshop called “Freezing Time” this Sunday, as part of Making @ SIGGRAPH. Details: 12:15-1:45 PM, South Hall G – Studio Workstation Area.

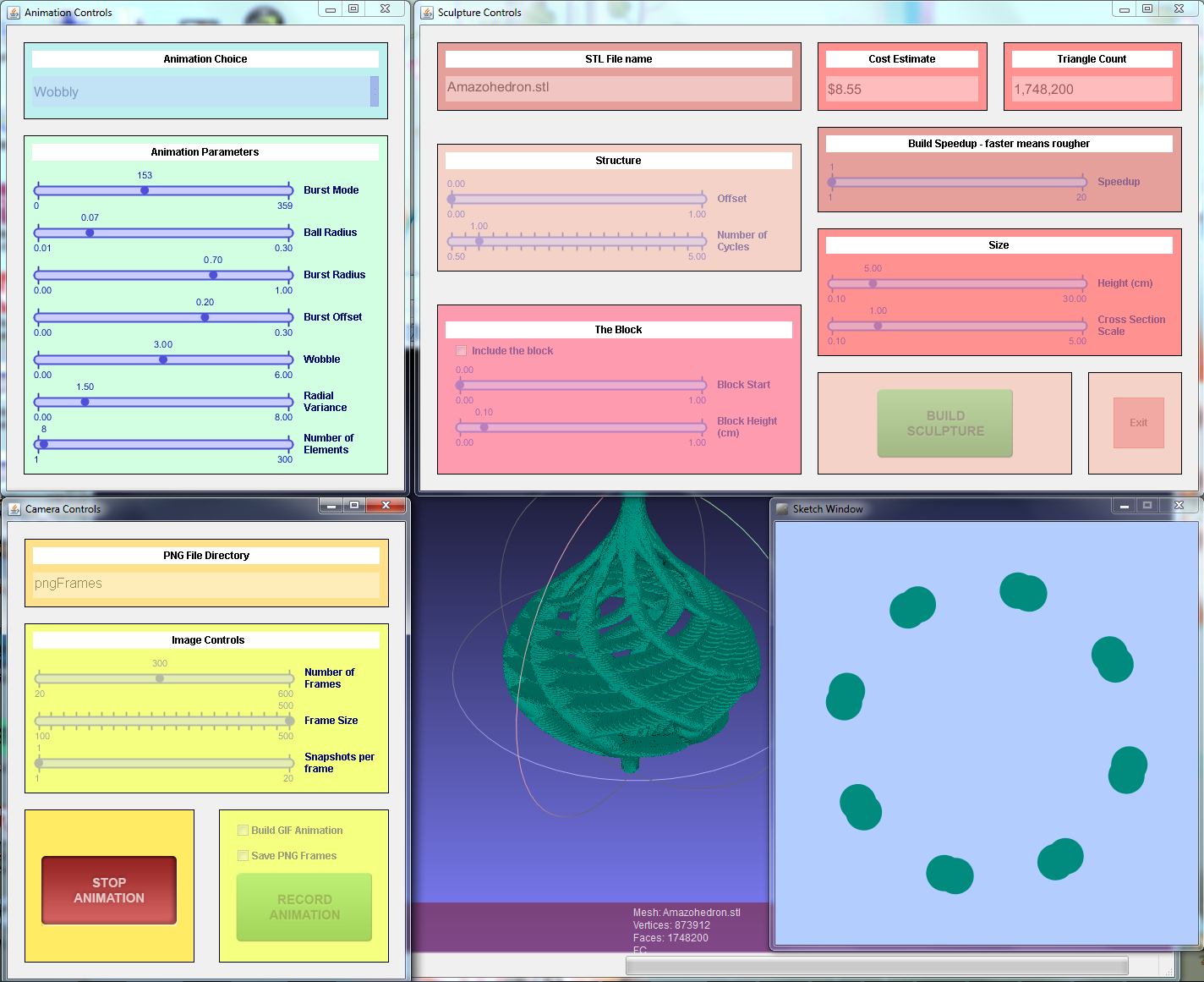

We’ll be teaching how to use T2Z, “Time To Z”, a program that lets you generate a 2D animation and then turn it into a 3D printable sculpture. Participants will be provided workstations, and there will be high-speed 3D printers available after the workshop. Can’t make it? Read on… Can make it? Get the code now and have fun on the plane ride to Los Angeles.

T2Z takes the frames of your animation and stacks them to form a 3D sculpture. This three.js program shows the transition for a number of animations – use the mouse to change the camera’s view. It’ll also get your cooling fan cranking, if your GPU is like mine. Turning “cycles” down to 1 keeps it sane.

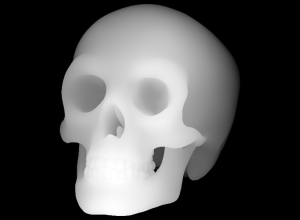

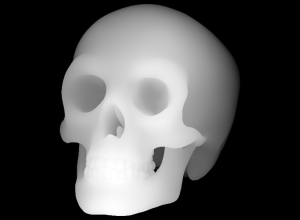

Here’s a simple example. This animation:

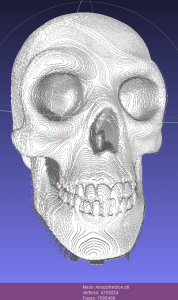

gives this sculpture when you stack the frames:

The (self-imposed) challenge is to create an interesting, looping animation that also creates a visually pleasing and printable sculpture. This example is pretty good, though the animation doesn’t quite loop perfectly. It would be easy enough to make it loop, but then we lose the base it can sit on. Tricky! If you want to hack on this code, it’s the Wobbly animation in T2Z.

There are many more examples in our gallery. I’ve been playing with the idea of data translation in general; you’ll see some experiments there. It’s been a great excuse for me to learn to use various tools at the local makerspace, Artisan’s Asylum, though I’ve not worked up the courage to actually use the plasma cutter yet. There are also plenty of fun & free tools for data manipulation, such as 123D Make, third-generation Photosynth, Sketchfab, 123D Catch, and on and on.

Even if you can’t attend the workshop, you can easily do this sort of experimentation at home. The T2Z code is free and open source, and well-documented. Companies such as Shapeways give you the ability to print high-quality models. We have lots of little animations in Animations.pde – go mess with them! There are also super-hacky “animations” at the end of this file: AnimatedGifReader turns a GIF into an STL 3D print file, FolderOfFramesReader does the same for a set of PNGs, and HeightField takes a grayscale image and uses the gray as a measure of height, e.g.

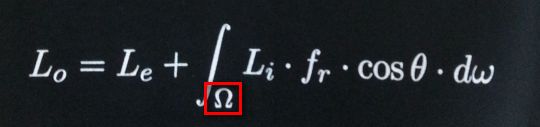

Processing is entirely fun to hack on (Debugger? We don’t need no stinkin’ debugger, println() is our only friend). It’s Java plus stuff to make graphics easy. I like the fact that to run the program you are faced with the code – the system invites you to start poking at the program from the outset. Andrew wrote most of the code, being a Processing pro (he wrote a book and teaches a course in it; the first half of his course is free). Me, I translated the Marching Cubes code to Processing: each pixel of each image is treated as a voxel, and the 3D model is the isosurface formed between the objects and the background.
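To make the pixel-as-voxel idea concrete, here is a minimal sketch in plain Java. It is not the T2Z code: the class name `FrameStackSketch`, the methods `stackFrames` and `countFilled`, the gray-value input format, and the threshold are all assumptions made for illustration. It only builds the inside/outside voxel grid; extracting the isosurface with Marching Cubes is a separate step that is not shown.

```java
// Hypothetical sketch: stack 2D frames into a 3D occupancy grid.
// Assumes each frame is already a grid of gray values in 0..255,
// indexed as frames[z][y][x], where z is the frame number (time).
class FrameStackSketch {

    // A voxel counts as "inside the object" when its pixel is at least
    // as bright as the threshold; everything else is background.
    static boolean[][][] stackFrames(int[][][] frames, int threshold) {
        int depth = frames.length;        // number of frames = Z extent
        int height = frames[0].length;
        int width = frames[0][0].length;
        boolean[][][] voxels = new boolean[depth][height][width];
        for (int z = 0; z < depth; z++) {
            for (int y = 0; y < height; y++) {
                for (int x = 0; x < width; x++) {
                    voxels[z][y][x] = frames[z][y][x] >= threshold;
                }
            }
        }
        return voxels;
    }

    // Counts the voxels marked as inside, as a quick sanity check.
    static int countFilled(boolean[][][] voxels) {
        int count = 0;
        for (boolean[][] slice : voxels) {
            for (boolean[] row : slice) {
                for (boolean v : row) {
                    if (v) count++;
                }
            }
        }
        return count;
    }

    public static void main(String[] args) {
        // Two tiny 3x3 frames: one bright pixel that moves a step to the right.
        int[][][] frames = {
            { {0, 0, 0}, {255, 0, 0}, {0, 0, 0} },
            { {0, 0, 0}, {0, 255, 0}, {0, 0, 0} }
        };
        boolean[][][] voxels = stackFrames(frames, 128);
        System.out.println("filled voxels: " + countFilled(voxels));
    }
}
```

The time axis simply becomes the Z axis, which is why a shape that moves between frames turns into a slanted or twisted solid.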

We hope to see you on Sunday! Or better yet, online, where we hope to see you sending us animations for the gallery and pull requests for code you’ve added.

Where did all this come from? Last year around May Andrew started making a series of looping GIFs using Processing, taking after the Bees & Bombs Tumblr feed. His goal was to make animations worth posting. These can now be found on Andrew’s Tumblr feed. Steve Drucker and I were the critics, over more than half a year.

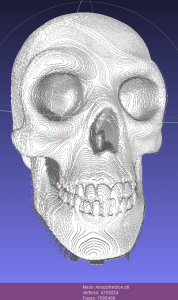

During this time I was attending and organizing 3D printer meetups in the Boston area. Mark Stock pointed out a fascinating way of modeling: instead of explicitly performing union operations on 3D models, the traditional CAD approach, he deposited objects into a large voxel grid. It’s much simpler and faster to test whether a voxel is inside a given primitive than to perform a union or other constructive solid geometry operation on a set of models. For example, computing the union of thousands upon thousands of spheres will bring most CAD modelers to their knees. Voxel in/out functions are trivial to compute for spheres, and Marching Cubes then guarantees a watertight, well-formed model with no geometric singularities, precision problems, etc. 3D printers themselves have limits to precision, so using voxels is a good match. Here’s an example of Mark’s work:

So, for me, these two things combined: animations could be used to define voxels, and Marching Cubes could be used to generate 3D representations. I made an exceedingly slow GIF to STL converter in Perl and ran a bunch of Andrew’s GIFs through it. A few interesting forms turned up and that got me started on playing with what I call “323,” converting from some three-dimensional form of data (an animation being 2D plus time) to another (a sculpture).

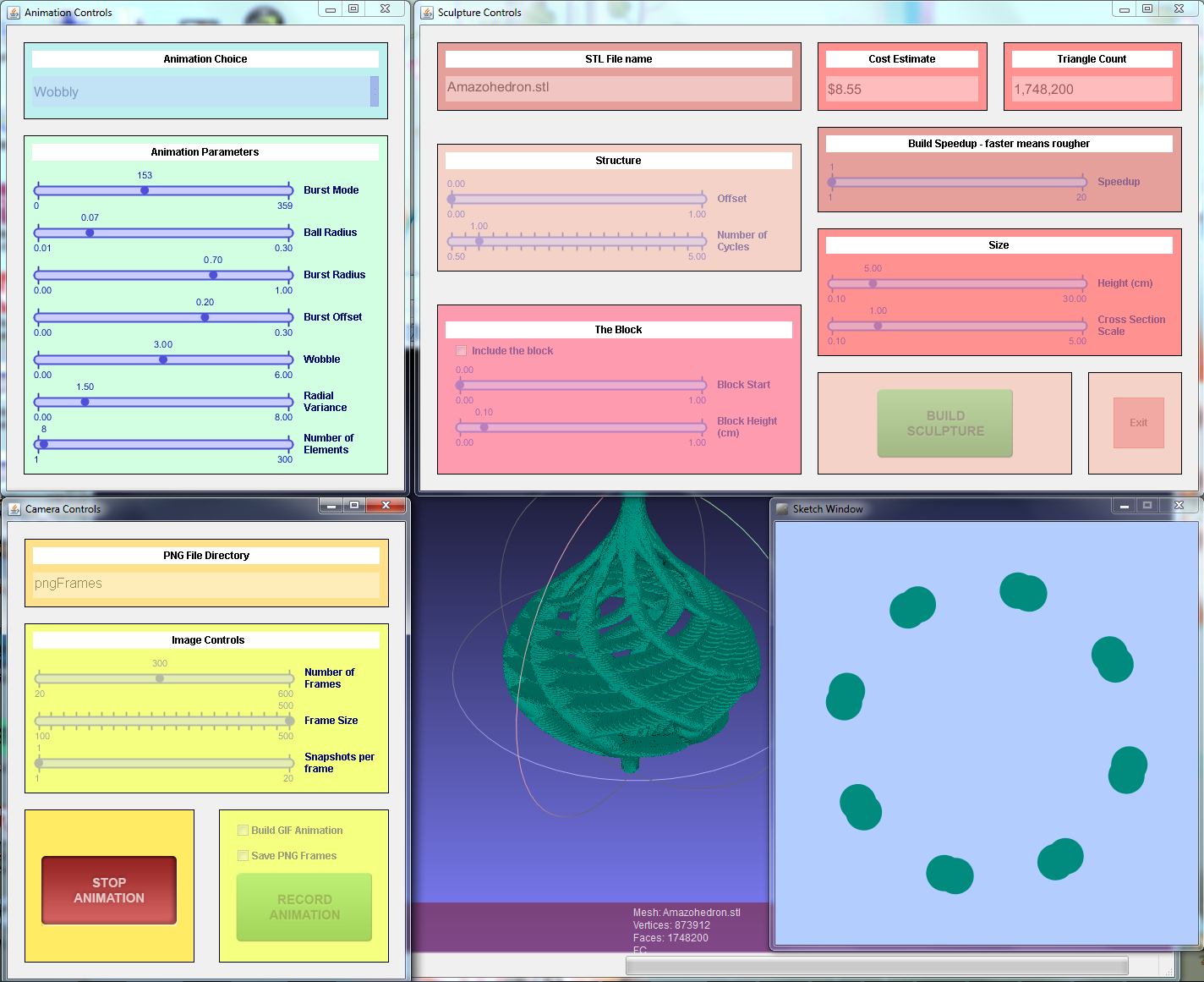
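The voxel-deposit approach to unions mentioned earlier can be sketched in a few lines of Java. This is an illustration only, not Mark Stock's code or T2Z's: the class `VoxelUnionSketch`, the method names, the `{cx, cy, cz, r}` sphere format, and the choice to sample each voxel at its center are all assumptions. The union needs no geometry intersection at all; each sphere just marks the voxels whose centers fall inside it.

```java
// Hypothetical sketch: union of spheres by depositing them into a voxel grid.
class VoxelUnionSketch {

    // n is the grid size per axis; each sphere is {cx, cy, cz, r} in voxel units.
    // Returns grid[z][y][x], true where any sphere covers the voxel's center.
    static boolean[][][] depositSpheres(int n, double[][] spheres) {
        boolean[][][] grid = new boolean[n][n][n];
        for (double[] s : spheres) {
            double r = s[3];
            double r2 = r * r;
            // Visit only the sphere's bounding box, clamped to the grid.
            int x0 = Math.max(0, (int) Math.floor(s[0] - r));
            int x1 = Math.min(n - 1, (int) Math.ceil(s[0] + r));
            int y0 = Math.max(0, (int) Math.floor(s[1] - r));
            int y1 = Math.min(n - 1, (int) Math.ceil(s[1] + r));
            int z0 = Math.max(0, (int) Math.floor(s[2] - r));
            int z1 = Math.min(n - 1, (int) Math.ceil(s[2] + r));
            for (int z = z0; z <= z1; z++) {
                for (int y = y0; y <= y1; y++) {
                    for (int x = x0; x <= x1; x++) {
                        // In/out test at the voxel center: squared distance vs. r^2.
                        double dx = x + 0.5 - s[0];
                        double dy = y + 0.5 - s[1];
                        double dz = z + 0.5 - s[2];
                        if (dx * dx + dy * dy + dz * dz <= r2) {
                            grid[z][y][x] = true; // setting true twice is the union
                        }
                    }
                }
            }
        }
        return grid;
    }

    // Counts the voxels covered by at least one sphere.
    static int countFilled(boolean[][][] grid) {
        int count = 0;
        for (boolean[][] slice : grid) {
            for (boolean[] row : slice) {
                for (boolean v : row) {
                    if (v) count++;
                }
            }
        }
        return count;
    }

    public static void main(String[] args) {
        // Two overlapping spheres in an 8x8x8 grid.
        double[][] spheres = { {3, 4, 4, 2}, {5, 4, 4, 2} };
        boolean[][][] grid = depositSpheres(8, spheres);
        System.out.println("voxels in union: " + countFilled(grid));
    }
}
```

The cost grows with the number of voxels each sphere touches rather than with the number of sphere-to-sphere intersections, which is why thousands of spheres stay manageable. The resulting grid is the kind of input that Marching Cubes turns into a mesh.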

Seeing the call for Making @ SIGGRAPH, we decided to go further and give a workshop on the process. The T2Z program that resulted is massively faster than my original Perl program, generating sculptures in a few seconds. It’s also much more usable, allowing you to make your own animations, hook up sliders to variables, and easily export them as GIFs, a set of PNGs, or a 3D STL model. Programming all this sucked up way more time than expected, and of course was highly addictive. Andrew made this Processing program do things that Nature did not intend (e.g., binary STL output and multi-window UI).

Personally, I find this whole design process entertaining. In idle moments (or at the dentist) I imagine what might make both an interesting animation and a worthwhile sculpture. It’s a fun way to think about modeling and animation, and one where my intuition doesn’t always pay off. The more I play, the more I learn.

Here’s a screenshot, to whet your appetite – click it for the full-size readable version:

So download the thing, install Processing and three little libraries (easy!), and start sliding sliders, pushing buttons, and hacking code! And let us know what you find.

BTW, if you want just one link to bookmark, it’s this: http://bit.ly/t2zspot